Huawei Executive: Huawei-Led Chinese Domestic Chip Firms Largely Overcome U.S. Compute Curbs, and China’s AI Models Also Rival U.S. Models

Yesterday, Nvidia released its FY26 Q2 earnings. When talking about the H20 chip, the company said its data center compute revenue came in at $33.8 billion, up 50% year over year but down 1% from the prior quarter, mainly because H20 sales dropped by $4 billion. During the quarter, Nvidia released $180 million in reserves it had set aside for H20 inventory, since it managed to sell about $650 million worth of H20 to “non-restricted customers outside of China.” At the same time, Nvidia admitted it sold zero H20 chips inside China in Q2.

Looking ahead, Nvidia guided Q3 revenue to around $54 billion (±2%), and this forecast assumes that it won’t be able to sell a single H20 chip in China. On the earnings call, Jensen Huang said that if Nvidia could unlock the China market with competitive products, it would represent a $50 billion business opportunity this year. The CFO added that if the H20 situation is resolved, Nvidia could bring in $2–5 billion in H20 sales in Q3, and potentially more if orders scale up further.

Jensen also spoke with Yahoo Finance after the earnings call, once again stressing the importance of the Chinese market to Nvidia:

"China has 50% of the AI researchers in the world. They have lots and lots of AI companies, and they have lots of AI chip companies. Without us there, they will obviously have their own alternatives. And they've been growing. And you've seen some of the AI chip companies had record years. Their stock prices at record high. You know, obviously there's a lot of opportunities there. If we have an opportunity to go compete for that market, it would be great for America, it would be great for China. And we have an opportunity to go capture some of that $50 billion that is growing at about 50% every year.

The second largest AI and computing market in the world. America should be part of that. It's great for exports. It's great for our treasury. It's great for American technology leadership. And it enables us to take that, to leverage that leadership all around the world. And so really important for just an incredible, incredible decision by President Trump. I'm very, very pleased with it. Very grateful for his decision. And we're going to do our best to go capture some of that market."

Reading the report, you can’t help but feel that booming AI demand and Nvidia’s unrivaled position in AI chips keep the company on a strong growth path. The only things that could slow it down now are geopolitics—and future competition from China’s homegrown chips.

Most of the discussion in China around Nvidia’s Q2 earnings has been full of admiration and optimism. But there are also voices zeroing in on the impact of not being able to sell the H20 chip to China, how that dragged down data center revenues and the stock price, and the longer-term geopolitical bind Nvidia is stuck in.

Zimubang (字母榜), a well-known Chinese tech media outlet, put it this way:

Jensen Huang’s biggest challenge is how to navigate an external environment shaped by geopolitics. He has to keep explaining to policymakers in Washington that restricting trade with China could hurt the long-term competitiveness of U.S. companies—and might even help create strong local rivals. At the same time, he also faces scrutiny from China, where regulators and companies may reduce their reliance on Nvidia’s products because of supply uncertainty and instead throw their weight behind domestic alternatives.

IDC data shows that China’s intelligent computing power is expected to grow from 1,037.3 EFLOPS in 2025 to 2,781.9 EFLOPS in 2028, a compound annual growth rate of over 30%. If such a huge, fast-growing market manages to form its own closed-loop hardware and software ecosystem, its reliance on outside technology will permanently shrink.

By then, even if geopolitics ease and the U.S. relaxes export controls, Nvidia could find the door to China effectively shut. Customers would already be comfortable with local supply chains, developers would be locked into domestic software platforms, and the whole industry would have completed a “de-Nvidia-fication.” Meanwhile, national policies like the Three-Year Action Plan for New Data Center Development are actively shaping this new infrastructure—setting efficiency standards (like PUE) and promoting technologies such as liquid cooling—which only speeds up the creation and adoption of local tech standards.
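As a quick sanity check on the IDC figures quoted above, the implied compound annual growth rate can be recomputed from the two endpoints. This is a minimal sketch: the helper name `cagr` is introduced here for illustration, and it assumes the figures are year-end values three years apart (2025 to 2028).

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# IDC figures as quoted in the text (EFLOPS).
eflops_2025 = 1037.3
eflops_2028 = 2781.9

# Assumption: three compounding periods between 2025 and 2028.
rate = cagr(eflops_2025, eflops_2028, years=2028 - 2025)
print(f"Implied CAGR 2025-2028: {rate:.1%}")
```

Under these assumptions the implied rate comes out at roughly 39% a year, which is consistent with the "over 30%" wording.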

Meanwhile, despite U.S. restrictions, the progress of China’s domestic computing power has been impressive. A Financial Times report just highlighted some of the latest progress in China’s domestic semiconductor push:

Huawei has three chip fabs under construction: one could start production by the end of this year, and the other two next year. Together, their capacity would surpass SMIC’s current output on comparable lines.

SMIC plans to double its 7nm capacity next year.

Once Huawei’s fabs come online, smaller Chinese chip designers like Cambricon, MetaX, and Biren will likely get greater access to SMIC capacity.

ChangXin Memory (CXMT) is testing samples of HBM3, aiming for launch next year.

Of course, these forecasts assume China can get enough lithography machines and U.S.-made equipment, and that yields improve quickly, which is far from guaranteed. There’s also some fuzziness around what counts as “advanced nodes” and whether certain lines are really in mass production or just pilot runs. Still, it’s hard to deny that Chinese domestic chip manufacturing is advancing fast.

Meanwhile, a single line from DeepSeek—“UE8M0 FP8 is designed for the next-generation domestic chips”—triggered an epic rally in Chinese chip stocks. The background behind this sentence has always been a bit of a mystery, and it’s hard to know what the real intention was. To me, it probably doesn’t mean what many people assume—that DeepSeek has decided to go all-in on backing domestic chips.

But honestly, that doesn’t matter anymore.

Last Friday, the A-share index shot past 3,800 points, and Cambricon’s share price even overtook Kweichow Moutai, making it the most expensive onshore stock in China. The general consensus forming inside China seems to be: under the “AI+” push, domestic AI has real potential. Crucially, Chinese AI is moving into a software-hardware co-development phase, which could reduce reliance on Nvidia, AMD, and other foreign compute suppliers.

On August 27, at the opening of the 4th 828 B2B Enterprise Festival, Huawei board member and President of Quality, Processes & IT Tao Jingwen gave a keynote. He said:

Hardware companies led by Huawei should already be able to largely overcome the compute bottlenecks imposed by the U.S. on China. China also has excellent large-model firms like DeepSeek. Our model competitiveness is already on par with U.S. players.

Tao also stressed:

Huawei has built a fully independent ecosystem, from compute to databases to toolchains, including the fully open-sourced CANN toolkit released last month. On Huawei Cloud, enterprises can access ModelArts, DataArts, MindIE, and more—so that no matter how complex the model, or how advanced the application, they can train and deploy quickly.

The 828 B2B Festival, first launched in 2022, is China’s first digital empowerment event for the B2B space, initiated by Huawei Cloud and partners. This year’s edition is the fourth. Tao pointed out that AI investment is expensive, especially for SMEs and local governments, and said Huawei hopes to make Huawei Cloud a “fertile black soil” for digital and intelligent transformation—offering ready-made data engineering, model engineering, and toolchains, so companies can focus on building and applying AI quickly.

Just before this event, Huawei Cloud was caught in a controversy over “model plagiarism.” In short, Huawei had multiple teams working on large models. The teams doing the hard, ground-up work moved more slowly, while another team simply repackaged Alibaba’s “Qianwen” model under a new name and grabbed the spotlight, sidelining the real builders. Senior leadership became aware but let it slide. When the issue came to light after open-sourcing, the lead of the genuine R&D team, worried about embarrassment and career risks, stepped forward to expose the truth.

Not long after, reports emerged that Huawei Cloud had announced a major restructuring that cuts and consolidates departments and could affect thousands of staff. Post-reorg, Huawei Cloud will focus on a “3+2+1” business mix: the “3” being general compute, AI compute, and storage; the “2” being AI PaaS and databases; and the “1” being security.

Huawei hasn’t officially commented, but sources close to Huawei Cloud confirmed to Chinese media that the shake-up aims to align software-hardware integration and architectural innovation with Huawei’s core compute business, especially Ascend AI. The goal is clear: tighten spending and move toward profitability, under growing financial pressure.

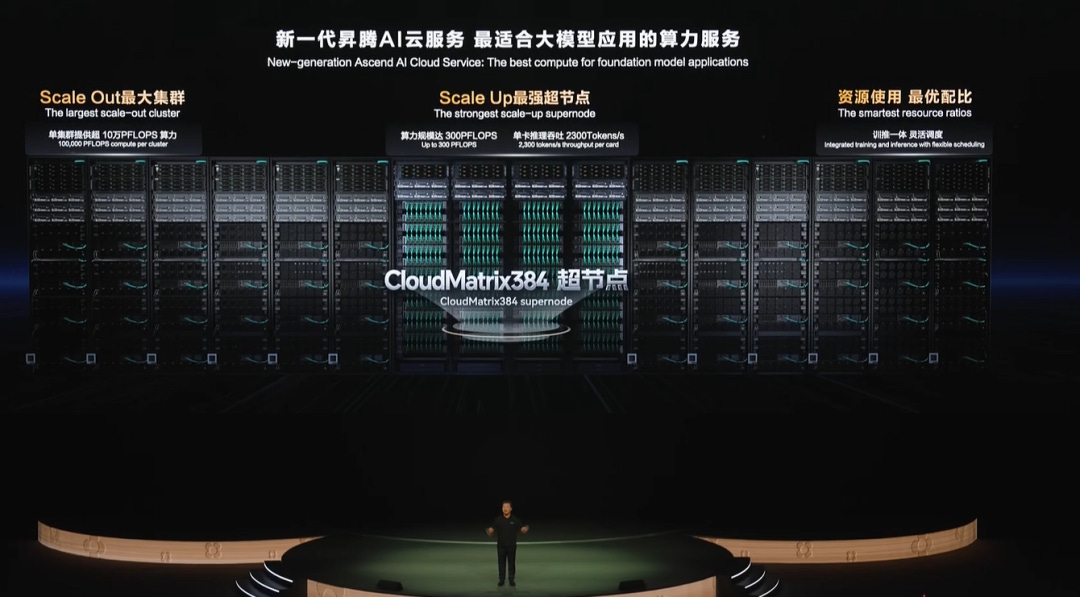

At the 828 Festival, Huawei Cloud announced its Tokens service now plugs into the CloudMatrix384 super-node, using the new xDeepServe architecture to split MoE models into separate Attention, FFN, and Expert micro-modules, distributing them across NPUs for parallel execution. After tuning, throughput improved from 600 tokens/s on a non-supernode single card to 2,400 tokens/s, with latency down to just 50ms.
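To make the idea of splitting an MoE model into Attention, FFN, and Expert micro-modules more concrete, here is a toy placement sketch. It is not xDeepServe's actual scheduler, whose design is not described in the announcement. The function name `place_modules`, the module naming scheme, and the round-robin policy are all assumptions made purely for illustration.

```python
from collections import defaultdict
from itertools import cycle


def place_modules(num_layers: int, experts_per_layer: int, num_npus: int) -> dict[int, list[str]]:
    """Toy round-robin placement of per-layer micro-modules onto NPUs.

    Hypothetical policy for illustration only; a real system would weigh
    memory, bandwidth, and load balance.
    """
    modules = []
    for layer in range(num_layers):
        modules.append(f"L{layer}.attention")
        modules.append(f"L{layer}.ffn")
        modules.extend(f"L{layer}.expert{e}" for e in range(experts_per_layer))

    placement = defaultdict(list)
    for npu, module in zip(cycle(range(num_npus)), modules):
        placement[npu].append(module)
    return dict(placement)


# Tiny hypothetical model: 2 layers, 4 experts per layer, spread over 4 NPUs.
placement = place_modules(num_layers=2, experts_per_layer=4, num_npus=4)
for npu, mods in sorted(placement.items()):
    print(f"NPU {npu}: {mods}")

# Throughput figures as reported in the text (tokens/s).
speedup = 2400 / 600
print(f"Reported throughput gain: {speedup:.0f}x")
```

Because each micro-module lives on its own device, modules that do not depend on each other can run in parallel. The reported jump from 600 to 2,400 tokens/s corresponds to a 4x gain.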

According to Chinese media, Huawei Cloud’s MaaS platform now supports most of the leading models—DeepSeek, Kimi, Qwen, Pangu, SDXL, Wan—as well as popular agent platforms like Versatile, Dify, and Koozi.

On August 28, the China International Big Data Industry Expo was held in Guiyang, Guizhou Province. At the opening ceremony, Zhang Ping’an, Huawei Executive Director and CEO of Huawei Cloud, stated that in the face of computing power demand expected to grow tens of thousands of times over the next decade, Huawei Cloud will remain committed to building a “fertile soil for computing power.”

According to Zhang Ping’an, Huawei Cloud has been expanding its computing power at a remarkable pace over the past year, with total capacity increasing by nearly 250% year-on-year. The number of customers using Huawei’s Ascend AI cloud services has also surged from 321 to 1,714.

On the infrastructure side, Huawei Cloud has deployed the country’s largest CloudMatrix384 supernode in Gui’an, making it a flagship project of China’s “East-to-West Computing” initiative. It has also built massive disaster recovery cloud centers in Gui’an and Ulanqab to provide fast, stable, and highly reliable computing support for enterprises—especially central state-owned companies. Today, Huawei Cloud powers a wide range of industries, including finance, large AI models, intelligent driving, the internet, consumer electronics, and embodied intelligence. For example, leading Chinese financial institutions rely on Ascend to stably support over 1,000 AI agents every day.

How does Huawei achieve both higher performance and larger scale? Zhang explained that the company leverages a “big mix” of strengths—integrating technologies across optical communications, networking, and power supply. By using systems to reinforce weak points, trading space, bandwidth, and energy for computing power, and harnessing large-scale cloud clusters, Huawei Cloud achieves performance gains and scale advantages.

In April this year, Zhang Ping’an said that in the AI era, Huawei Cloud will firmly commit to building an “independently innovative and trustworthy” foundation for AI compute, using Ascend AI Cloud services to accelerate AI development and deployment across industries. At the same time, it will stay deeply focused on verticals, using the Pangu large model as its engine to create industry-grade to-B solutions that can reshape thousands of sectors. Huawei Cloud will also keep strengthening its ecosystem, working with partners and customers to explore the vast opportunities of AI together.

Earlier, in July 2024, Zhang proposed that China should not rely solely on the most advanced AI chips but should leverage its advantages in bandwidth, network, and energy to address AI computing power issues through architectural innovation in the cloud.

The full transcript of Tao Jingwen’s keynote speech follows:

This year marks the 11th Big Data Expo, and also the third year that the 828 B2B Enterprise Festival has taken place here in Guizhou. Over these years, we’ve all experienced the shockwaves of digital transformation and the leap forward in AI technology. New applications keep emerging, and they’re accelerating the reshaping of every industry. So, how can we seize the opportunities of the AI era? I’d like to share Huawei’s perspective, drawing on our own practice, technological innovation, and ecosystem building—using the 828 B2B Festival as a platform.

I’ll keep it to three main points.

First, AI is entering a historic stage of development. Intelligent applications have now become part of enterprise production systems, and how enterprises apply AI will determine whether the technology delivers real value. Two years ago, when large models came onto the scene, AI became the talk of the town. At first, everyone scrambled for compute power—so much so that it led to U.S. restrictions on AI chips to China and a limited supply of Nvidia products. Then, last year, the focus shifted to competing on models, and within six months, hundreds of new Chinese large models appeared. Now, we see the industry returning to rational questions: What value does AI actually bring to society and to business? Can AI deliver concrete outcomes inside enterprise systems?

I often say AI is both a revolution in tools and a tool for revolution. Just like the steam engine during the Industrial Revolution—the opportunity wasn’t the steam engine itself, but what it enabled across transport and industry. In the same way, AI must become the new productivity tool for enterprises. Without real-world, scenario-driven value, AI is meaningless.

At Huawei, I’m responsible for our digital and intelligent transformation. Honestly, I’ve been very anxious. This year I’ve been asking myself: what’s the real relationship between today’s AI and the years we’ve spent on IT and digitalization? Does AI mean all the ERP, MES, CRM systems we built are now obsolete? Of course not. The question is: what new value can AI add to our business? Huawei is an innovation-driven company—half our people are in R&D. With over 100,000 researchers, if AI were only about cutting jobs, who would embrace it? Instead, we want AI to boost productivity, not reduce people.

So our thinking is: in this era of massive AI change, enterprises must find certainty amid uncertainty. Choose the right business scenarios, use AI tools effectively, and make sure AI value lands and creates returns.

From Huawei’s experience, we’ve developed an engineering methodology we call “three layers, five integrations, eight steps.”

The three layers: pick the right scenario, deliver it quickly, and ensure it creates real business value.

The five integrations: AI must mesh with existing organizations, processes, IT, data, and people—it’s not about tearing everything down.

The eight steps: a set of concrete actions, but the key is still choosing the right enterprise scenarios.

Right now, most companies face the same problem: everyone is rushing into AI all at once. Society is like this, and so are enterprises. I oversee AI across the company, and last year alone Huawei submitted 1,680 different AI application proposals to my team. But I only have 3,400 people—there’s no way we could take on that many projects. That’s why I’ve been saying the entry point has to be small, but the depth has to be big—“one centimeter wide, ten thousand meters deep.” You’ve got to focus on the company’s core business scenarios. For us, that meant picking things like sales contracts and order processing. Today, about 18% of Huawei’s code is written by AI, and most of our testing and documentation is AI-generated. But we didn’t use AI to cut staff—we integrated it into the workflow so our developers could focus on higher-value work.

Take contracts as another example. A contract needs a precise, serious answer, and that means you have to find the right AI solution for that exact scenario. At Huawei, we do business globally, with around 200 million customs clearances every year. The prep work for those filings is extremely complicated. On top of that, we handle hundreds of thousands of contracts a year, all of which carry risks and require clause reviews. We’ve applied AI deeply in these areas, turning it into a tool our business teams can’t live without and boosting output significantly. That’s our goal: scenario-driven AI that builds on the IT and data foundations companies already have, combines them with large models, and ensures real business value. In the past IT was about iterative development—now IT teams need to work hand in hand with business experts to solve industry-specific problems.

One more example: our manufacturing lines. Operators on automated lines have an annual turnover rate of 19–26%. Each shift has about 6,000 workers, and with three shifts running 24/7, that’s about 18,000 people. Every year roughly a quarter leave, which means we have to hire and train large numbers of new staff. If the training isn’t solid, when problems pop up and can’t be fixed quickly, the line stops. So we built an AI system that can solve problems from just a photo or a single sentence. It was a small entry point, but it dramatically cut training costs, reduced downtime, and boosted production capacity by 20–30%.

This is my first point: we have to focus on making AI truly land in real business scenarios.

It’s not just about raw compute power. Good AI comes from the effective combination of business scenarios, compute, models, and data. For hardware, companies like Huawei have already made big strides—China should be able to largely overcome the bottlenecks created by U.S. restrictions. On the model side, we now have strong players like DeepSeek, and China’s large-model competitiveness is no longer behind the U.S. What’s more, China has the world’s richest real economy and business scenarios, plus the most complete data accumulation. If we push in the right direction, I believe China can surpass the U.S. in AI applications.

My second point is about Huawei Cloud—especially our effort to make Guizhou the hub of China’s computing power.

Right now in Guizhou, we’re strengthening Huawei Cloud so it can serve as the “fertile black soil” for digitalization and intelligence, building it into China’s powerhouse for AI compute. By August, we had already deployed 75.8 EFLOPS of computing power here, with more than 238,000 Ascend AI cards. AI is expensive, especially for SMEs and local governments, so our goal is to make Huawei Cloud the foundation where data engineering, model engineering, and toolchains are all ready-to-use—so companies can focus on building AI applications quickly and effectively.

Huawei Cloud has three major bases in China, and Guizhou is now the biggest, strongest, and best environment. What matters in AI isn’t single-card performance, but application results. Back in early 2024, daily token consumption in China was about 100 billion; now it’s over 30 trillion a day—a 300x increase. Very soon, token consumption itself will be the key measure of AI application results. That’s why we want Huawei Cloud to integrate domestic compute, data engineering, toolchains, and Chinese models into a single, easy-to-use platform. From idea to implementation, developers should be able to build AI applications that are simple, fast, and deliver value right away.

Huawei has built a fully independent ecosystem that doesn’t rely on the U.S.—from computing power and databases to toolchains, including last month’s fully open-sourced CANN toolkit—aiming to make a solid contribution to the development of China’s AI.

On top of that, we provide ModelArts (for models and AI services), DataArts (for data management), and MindIE (for inference). Huawei Cloud integrates the widest range of models in the world, including dozens of validated autonomous driving models, so we can deliver complete solutions to industries like automotive and help them quickly develop intelligent applications. Whatever the complexity of the model or the advancement of the use case, our tools are designed so enterprises can train, infer, and deploy quickly.

Third, with the 828 B2B Festival, Huawei aims to open this ecosystem to partners and enterprises across China. Over three years, more than 100,000 companies have participated. This year’s theme is enterprise AI application landing. We’ve upgraded our model service platform to support the latest, widest range of Chinese models, with 0-Day integration—so when a new model like DeepSeek launches, enterprises can test it on Huawei Cloud right away. We’re also supporting multiple Agent platforms and flexible token services.

During the festival, more than 12,000 digital products will be launched on the 828 one-stop enterprise application marketplace. Over 600 high-quality AI solutions covering cloud migration, AI applications, digital transformation, and enterprise intelligence will be shared with businesses.

AI is the ultimate tool of our era. It will deliver exponential gains in efficiency, but we must keep optimizing the platforms and ecosystems where AI is applied. At this historic starting point, we hope more Chinese enterprises will ride the wave of the AI era, building the competitiveness needed for high-quality growth. And we call on all sides to join hands in creating an open, collaborative digital ecosystem—so together, Chinese enterprises can innovate and lead in the AI age.

Huawei Cloud’s press release on Zhang Ping’an’s speech:

Leveraging a “Hodgepodge” Advantage to Build a Fertile “Black Soil” for Compute Power

Compute power is the basic infrastructure of the intelligent world. Large AI models have driven demand for massive compute, and in the next decade, demand could grow by tens of thousands of times.

According to Zhang Ping’an, Huawei Cloud has been firmly committed to building this “fertile black soil” of compute. Centered on three key hubs—Gui’an, Ulanqab, and Wuhu—Huawei Cloud is working to create a nationwide compute network. China’s “black soil” for compute is quickly becoming an AI compute field that serves global customers. By August, Huawei Cloud’s overall compute scale had grown nearly 250% year over year, with the number of customers using Ascend AI Cloud services increasing from 321 last year to 1,714 this year.

In Gui’an, Huawei Cloud has deployed its largest CloudMatrix384 super-node, serving customers nationwide and setting a benchmark for the East-to-West Computing Project. Huawei has also built massive disaster-recovery cloud centers in Gui’an and Ulanqab to provide high-performance, stable, and reliable compute services for enterprises, especially state-owned companies.

How can performance and scale be improved? Zhang said Huawei leverages its “hodgepodge advantage”—integrating expertise across optical communications, networking, and power. By supplementing weak points with system-level design, and trading space, bandwidth, and energy for compute, Huawei Cloud achieves both scale and performance advantages through large compute clusters.

In April, Huawei Cloud launched the CloudMatrix384 super-node in Wuhu. By interconnecting 384 Ascend NPUs and 192 Kunpeng CPUs with the new MatrixLink high-speed, full-mesh network, Huawei created a super “AI server” with 300 PFLOPS of compute. For trillion-parameter model training, up to 432 of these super-nodes can be scaled out horizontally to form a 160,000-card AI cluster, capable of training more than 1,300 100B-parameter models simultaneously.
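The cluster figures quoted above can be checked with simple arithmetic. The sketch below uses only the numbers in the press release. The aggregate compute figure is an assumption: it supposes that compute scales linearly with the number of super-nodes, which the release does not claim, and the numeric precision behind the 300 PFLOPS figure is not stated.

```python
# Figures as quoted in the press release.
NPUS_PER_SUPERNODE = 384
CPUS_PER_SUPERNODE = 192
PFLOPS_PER_SUPERNODE = 300
MAX_SUPERNODES = 432

total_npus = NPUS_PER_SUPERNODE * MAX_SUPERNODES
total_cpus = CPUS_PER_SUPERNODE * MAX_SUPERNODES

# Assumption: perfectly linear scaling across super-nodes (an upper bound).
total_eflops = PFLOPS_PER_SUPERNODE * MAX_SUPERNODES / 1000

# Average compute per NPU implied by the super-node figure, in TFLOPS.
tflops_per_npu = PFLOPS_PER_SUPERNODE * 1000 / NPUS_PER_SUPERNODE

print(f"NPUs in a full cluster: {total_npus:,}")
print(f"CPUs in a full cluster: {total_cpus:,}")
print(f"Aggregate compute (linear upper bound): {total_eflops:.1f} EFLOPS")
print(f"Implied compute per NPU: {tflops_per_npu:.2f} TFLOPS")
```

The NPU count works out to 165,888, which matches the "160,000-card" description once rounded down.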

Huawei Cloud now provides highly competitive compute services to industries from finance, smart driving, and large models to internet, consumer electronics, and embodied intelligence. Top Chinese financial institutions, for example, use Ascend to reliably support more than 1,000 AI agents daily.

Whether online or offline, Huawei Cloud offers a unified, diversified compute architecture to support both training and inference. From autonomous driving to robotics to enterprise IT systems, Huawei is embedding Ascend into production systems, accelerating industry-wide intelligence upgrades.

Ascend AI Cloud Services × Tokens Services: Accelerating Intelligence Across Industries

“High-quality datasets determine how good an AI model is,” Zhang noted. “Today, many companies already have data warehouses and lakes for BI, but these platforms aren’t optimized for AI models. There’s still a lot of preparation and knowledge extraction needed.”

To address this, under the guidance of the National Data Bureau, Huawei Cloud is working with cities, enterprises, and partners to create a new paradigm of trusted AI data spaces. Based on real-world scenarios, these spaces feature all-domain ingestion, AI-readiness, and trusted circulation. This enables enterprises to use their accumulated large-scale data to automatically build knowledge graphs, and allows business staff to quickly develop AI agents with enterprise-level large models.

Zhang stressed that Huawei Cloud is committed to building this fertile “black soil” of compute. With Ascend AI Cloud Services and Tokens Services, it delivers the “final compute results” customers need. The Tokens Service shows outstanding performance in high-throughput scenarios: with CloudMatrix384, latency can be kept at 50ms while throughput reaches 2,400 TPS—an industry record. Beyond Huawei’s Pangu models, Huawei Cloud also supports leading open-source models such as DeepSeek and Kimi, enabling them to run faster and better on Ascend Cloud.

Embracing AI-Native Thinking to Seize the Intelligent Era

With support from customers, partners, and developers, Huawei Cloud continues to grow steadily, leading in multiple core industries. It ranks No. 1 in government, industrial, financial, and automotive markets, and is a leader in healthcare, pharmaceuticals, and meteorology. In areas like containers and databases, Huawei Cloud has entered Gartner’s Magic Quadrant.

So far, Huawei Cloud has maintained a record of zero major accidents for 756 consecutive days. “We believe safety, stability, quality, and continuous innovation are the key reasons customers choose us,” Zhang said.

He pointed out that when the steam engine was invented, people once tried to put it on a tricycle—which delayed the invention of trains by forty years. The lesson: in the AI era, we must embrace AI-native thinking. We need to design applications, data, processes, and organizations around AI from the ground up.

“Today, humans use silicon-based machines as tools; AI is the assistant. In the future, AI may become the primary executor of tasks, with humans managing it, turning it on and off. For companies looking to build an edge with AI, the only way to fully unleash its potential—improving efficiency, creating new business models, and seizing the opportunities of the intelligent era—is to embrace AI-native thinking,” he concluded.

Excellent as usual...all complicated issues with hype and lack of clarity around issues such as domestic semiconductor capacity for "advanced nodes", depending on how they are defined. Agree that there is a window here for Nvidia in China. No one mentioned all the Jetson Thor sales, but the big money is in inference, and if domestic support for FP8 picks up (for training), and Ascend CloudMatrix is good enough for inference, then where does Nvidia play with downgraded GPUs? No one in Washington knows, and Jensen also does not know how far/long he and David Sacks can push the "support or addict" China's 50 percent of global AI developers...eventually, something has to give with a complete rethink of export controls on leading GPUs, which I support but the narrative in DC does not...